Guide AI with sketches using T2I-Adapter, an alternative to ControlNet

Sketch-to-image, the creation of AI images from sketches, is a fast-growing field, and new tools are emerging with it. T2I-Adapter was originally released in February 2023 and is now available for the new SDXL (Stable Diffusion XL) text-to-image model.

In this article we will explore:

- What is T2I-Adapter and how does it work

- How to try the adapter for free

- Sketch-to-image online tools

  - Scribble Diffusion

  - Stable Doodle

  - RenderAI

- Usage and Limitations

- Conclusion

Images generated from a sketch with the prompts “Cat with a jeans jacket, ‘Digital Art’ Style” and “Cute owl, ‘Origami’ Style”, by Clipdrop

What is T2I-Adapter and how does it work

Adapters add extra input conditions to AI image generation, such as sketches, segmentation maps, or key poses. T2I-Adapter is a condition-control solution that supports several kinds of input guidance, which gives more precise control over the result.

The T2I-Adapter network provides supplementary guidance to pre-trained text-to-image models such as the SDXL model from the Stable Diffusion family. With it, the model can follow the outlines of a sketch and, given a prompt, generate a new image that respects them. It is worth noting that T2I-Adapter (like ControlNet) is an additional guide to the process: it works on top of the original large text-to-image model, which stays unchanged.

Drawing by Stability AI
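
To make the "works on top" idea concrete, here is a toy sketch in Python. It is not the real network: as I understand the T2I-Adapter design, the adapter produces feature maps that are added to the features of the frozen base model, and this example shrinks that idea down to short lists of numbers. The names `FrozenBaseBlock`, `ToyAdapter`, `guided_forward`, and `conditioning_scale` are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import List

Features = List[float]


@dataclass(frozen=True)
class FrozenBaseBlock:
    """Toy stand-in for one stage of the pre-trained model; its weight is never modified."""
    weight: float

    def forward(self, x: Features) -> Features:
        return [self.weight * v for v in x]


@dataclass
class ToyAdapter:
    """Toy stand-in for the small network that turns a sketch into guidance features."""
    gain: float

    def features(self, sketch: Features) -> Features:
        return [self.gain * v for v in sketch]


def guided_forward(
    base: FrozenBaseBlock,
    adapter: ToyAdapter,
    x: Features,
    sketch: Features,
    conditioning_scale: float = 1.0,
) -> Features:
    """Add the adapter's guidance on top of the untouched base output."""
    base_out = base.forward(x)
    guidance = adapter.features(sketch)
    return [b + conditioning_scale * g for b, g in zip(base_out, guidance)]


base = FrozenBaseBlock(weight=2.0)
adapter = ToyAdapter(gain=0.5)
x = [1.0, 2.0, 3.0]
sketch = [0.0, 1.0, 0.0]  # a single "stroke" in the middle position

print(guided_forward(base, adapter, x, sketch))
print(guided_forward(base, adapter, x, sketch, conditioning_scale=0.0))
```

With `conditioning_scale=0.0` the guidance disappears and the result equals the plain base output, which is the sense in which the adapter guides the model without changing it.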

How to try the adapter for free

You can find all the information in the public TencentARC T2I-Adapter repository. If you want to try the sketch adapter, it is available for free in the Hugging Face T2I-Adapter Space.

Example of how to use the T2I-Adapter by TencentARC on Hugging Face

Sketch-to-image online tools

There are several tools available for sketch-to-image generation. Some offer free tiers, but in most cases a subscription is needed after a few tries because of the high cost of running AI models on servers.

Scribble Diffusion

Scribble Diffusion lets you turn a sketch into a refined image using AI. It is an open-source project from Replicate and a great tool for playing with and exploring the possibilities of AI image generation. It uses a Stable Diffusion model with ControlNet.

While Scribble Diffusion is free, the capabilities and features are very limited. For example, you can only draw the sketch using the online user interface and there are no options to control the results besides the given prompt.

Image of Scribble Diffusion app user interface

Stable Doodle

Stable Doodle is geared toward both professionals and novices, regardless of their familiarity with AI tools. It has a user-friendly interface that lets designers, illustrators, and other professionals sketch ideas that can be used immediately in their work. It uses a Stable Diffusion model with T2I-Adapter.

Stable Doodle allows for artistic customization, providing 14 styles to choose from via Stable Diffusion XL. Styles range from realistic (photography) to cinematic to creative (fantasy art and origami).

Image of Stable Doodle app user interface

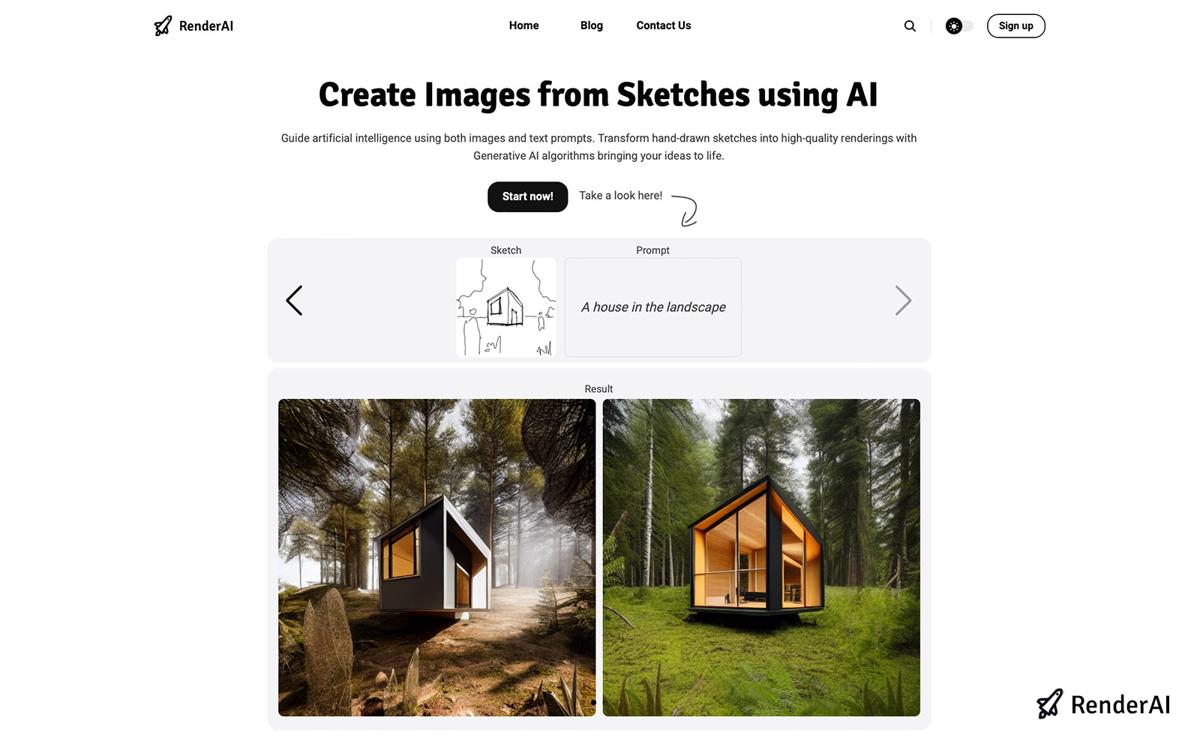

RenderAI

RenderAI is an innovative tool that allows users to guide artificial intelligence using both images and text prompts. You can transform hand-drawn sketches into high-quality renderings with Generative AI algorithms. You can draw your sketches directly online, or upload them from your computer or tablet.

Image of RenderAI app

Usage and Limitations

Although sketch-to-image examples demonstrate impressive capabilities, it is important to recognize their inherent limitations. These generators analyze the outlines of the input drawing and use them, together with the prompt, to produce a visually pleasing and coherent result. The final output therefore depends on the initial drawing and the description provided by the user, and accuracy may vary with the complexity of the scene.
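
Because the result depends so heavily on the input drawing, it can help to check how clean the sketch is before uploading it. The snippet below is only an illustration, assuming the drawing is available as a grid of grayscale values from 0 (black) to 255 (white). The function names `to_outline_map` and `stroke_coverage` and the threshold of 128 are hypothetical choices, not something any of the tools above documents.

```python
from typing import List

Grid = List[List[int]]


def to_outline_map(gray: Grid, threshold: int = 128) -> Grid:
    """Return 1 where a pixel is darker than the threshold (a pen stroke), else 0."""
    if not 0 <= threshold <= 255:
        raise ValueError("threshold must be between 0 and 255")
    return [[1 if px < threshold else 0 for px in row] for row in gray]


def stroke_coverage(outline: Grid) -> float:
    """Fraction of pixels marked as strokes; 0.0 for an empty grid."""
    total = sum(len(row) for row in outline)
    if total == 0:
        return 0.0
    return sum(sum(row) for row in outline) / total


# A tiny 3x3 "drawing": dark values are pen strokes on a white page.
drawing = [
    [255, 30, 255],
    [200, 10, 90],
    [255, 255, 255],
]

outline = to_outline_map(drawing)
print(outline)
print(stroke_coverage(outline))
```

A very low coverage suggests the sketch may be too faint to guide the model, while a very high one suggests heavy shading that may blur the outline; both readings are rules of thumb rather than documented thresholds.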

Conclusion

To wrap up, the introduction of the T2I-Adapter represents a pretty exciting leap forward in the world of AI-generated images. Adapters like this let AI models take inputs such as sketches into account, giving us a lot more say in how our AI-created images turn out.

One cool application for controlling image generation with sketches is RenderAI, which works alongside the Stable Diffusion XL text-to-image model. Other tools, such as Scribble Diffusion and Stable Doodle, also tackle sketch-to-image.

Keep in mind that the whole sketch-to-image process does have its limits; the outcome depends on the drawing you start from, how complex the scene is, and how the models evolve over time. So, as this field keeps evolving, the T2I-Adapter looks like a neat way to steer AI image creation down a more precise and controlled path, leaving its mark on everything from art to practical applications.

—

References:

- Based on the Stability AI article “Clipdrop Launches Stable Doodle”

- AI app images from Stable Doodle, Scribble Diffusion, and RenderAI

About the Author:

This article was written by Francisco. Architect, Visual Designer & Founder of RenderAI.